Environmental Performance Auditing in Australia, Canada and India

by Awadhesh Prasad, Australian National University, Canberra, Australia

Environmental performance auditing in Australia, Canada and India was studied as part of a three-component doctoral research project. The other components were a global trend analysis and an investigation of current practices in environmental performance auditing. Following a brief examination of the mandate and institutional arrangements of the Supreme Audit Institutions (SAIs) of Australia, Canada and India and of the study methodology, this article provides an overview of results and emerging issues.

MANDATE AND INSTITUTIONAL ARRANGEMENTS

Having a common British heritage, the Australian, Canadian and Indian SAIs are similar in many respects. They are independent statutory institutions that are not subject to any direction when selecting performance audit topics or the methods used to audit them, and they have full power to call witnesses and documents. Through the public accounts or other relevant committees, they report their findings to parliament and assist in holding the executive government accountable. These institutions, however, do have some notable differences. With few exceptions, the Canadian and Australian SAIs have only national jurisdiction, whereas the Indian SAI’s jurisdiction covers all three levels of government.

Unlike its Australian and Indian peers, the Canadian SAI has a specific mandate to audit environmental matters. The Commissioner of the Environment and Sustainable Development, a statutory official with a dedicated workforce within the Canadian SAI, undertakes this function. The institutional arrangements of the three SAIs reflect their respective mandates. While Australian (1 office, 350 staff) and Canadian (5 offices, 600 staff) SAIs have a few offices and moderate staff strength, the Indian SAI is quite large with 48,000 staff in 141 offices.

METHODOLOGY

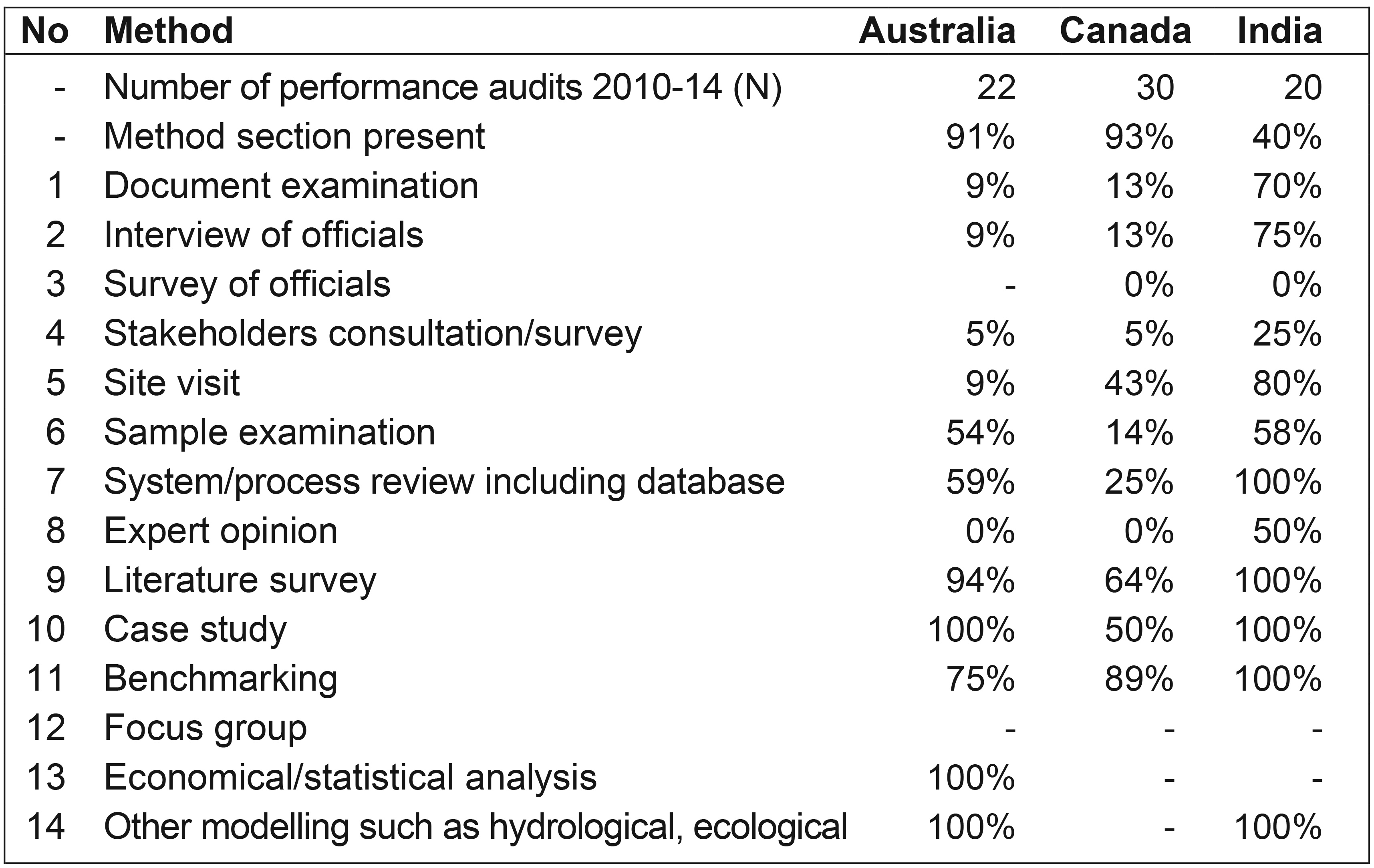

The study of the three SAIs covered their enabling statutes, performance audit standards, manuals and guidelines, as well as 72 environmental performance audit reports (Australia: 22; Canada: 30; India: 20) for the five-year period 2010-2014. The key attributes of performance audits, such as audit objectives and criteria, methods for evidence gathering, argumentation and reporting style, along with audit recommendations, were recorded and analyzed. The observations from the content analysis presented “what is.” These were compared with the relevant requirements of the SAIs’ standards, manuals and guidelines, which present “what should be.”

RESULTS AND DISCUSSION

Audit Planning and Quality Control Framework—Good Practices. The three SAIs’ audit planning and Quality Management Systems (QMS) are considered either sound by peers (Australia: Wilson, 2011; Canada: Australia et al., 2010) or adequate but needing enhancement (India: Australia et al., 2012).

The Australian and Canadian performance audit standards (and manuals) are obligatory, while the Indian standards (and manuals) are discretionary. Likewise, the Australian and Canadian performance audit reporting standards are highly prescriptive compared with the Indian reporting standards that barely specify any content requirement.

The three SAIs use risk-based analysis and employ long-term strategic planning for selecting performance audit topics. The Australian “blue book” preparation and Canadian “one pass planning” are quite similar in that they produce rolling lists of potential performance audit topics that are annually reviewed. In a slightly different approach, the Indian SAI’s long-term strategic plan and medium-term perspective plan guide annual audit planning. The Indian SAI has established Audit Advisory Boards for the headquarters office and regional audit offices that comprise eminent persons and highly qualified professionals from diverse fields to assist in audit planning.

Notwithstanding their differences, all three SAIs have exhibited notable practices in planning and undertaking performance audits. For example, the SAI of Australia publishes its “blue book,” the finalized annual audit work program, on its website informing all interested parties, including the public, and it provides opportunities for stakeholders to make contributions to every performance audit. The Canadian SAI developed a manual, “4th E Practice Guide,” to integrate environmental considerations in all performance audit work. The Indian SAI created separate “Environment and Climate Change–Auditing Guidelines” to enable more effective environmental audits.

Reporting of Audits: Matters Needing Attention. As the end product of performance audits, the quality of audit reports largely determines the credibility and reputation of SAIs in the eyes of parliament and the public. Consequently, SAIs are very conscious about the presentation of their reports and tend to specify related requirements in their standards/manuals. SAIs are expected to adhere to their standards and produce reports that are consistent in terms of key parameters, such as audit objectives, criteria and alignment between objectives and conclusions.

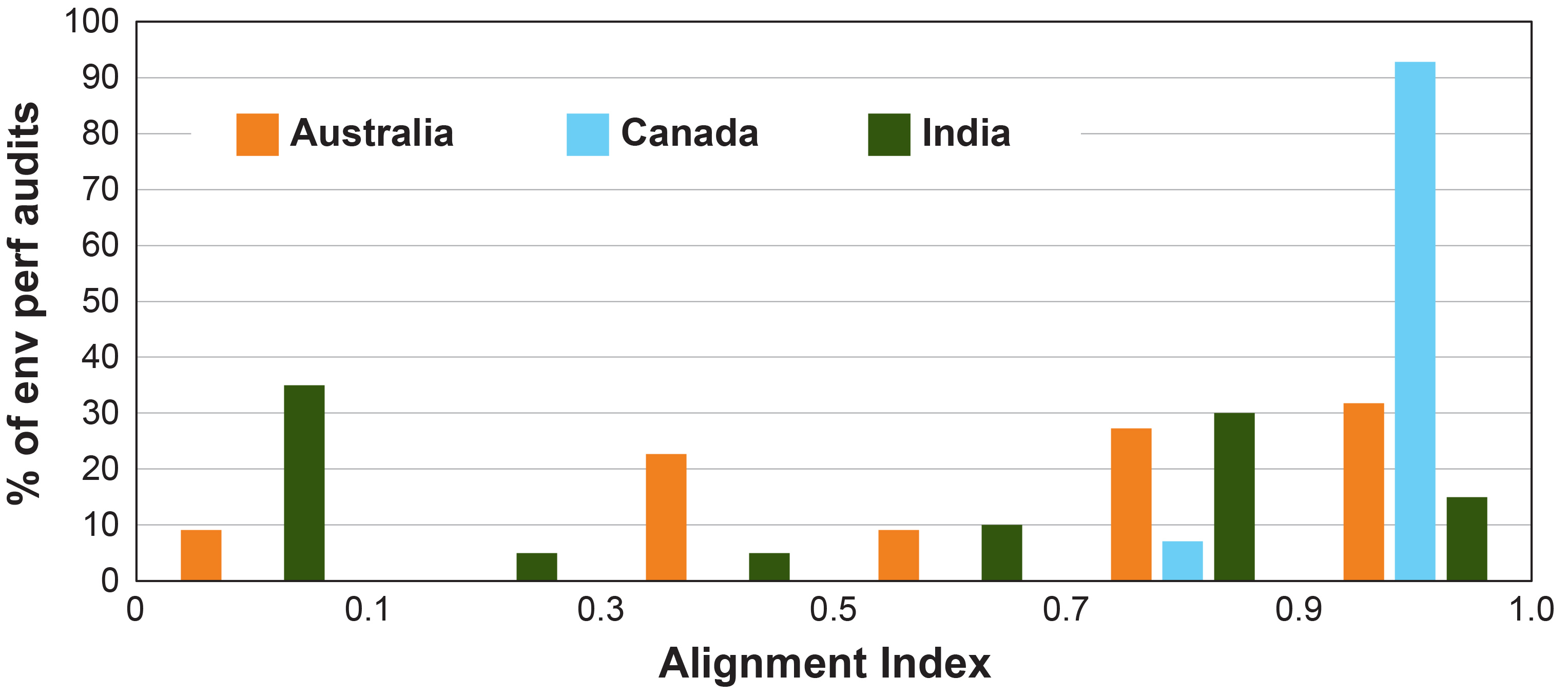

However, this is not always observed, as the reports from the three SAIs showed significant variability. One example is the alignment between audit objectives and conclusions, which was estimated using a rule-based index ranging from 0 (no alignment) to 1 (perfect alignment). All three SAIs’ performance audit standards/manuals prescribe that a performance audit must conclude against each of its objectives. However, the environmental performance audits of all three SAIs did not always meet this requirement. Only 32% of Australian and 15% of Indian environmental performance audits, compared with 93% of Canadian audits (Figure 1), achieved an alignment index between 0.9 and 1, qualifying them as well-aligned audits in which each objective had a directly or closely matching conclusion. About 10% of Australian and 35% of Indian environmental performance audits (Figure 1) did not align conclusions with their respective objectives at all. In these cases, either the audit objectives were not defined clearly, or the conclusions did not address the stated objectives, or both.
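The article does not spell out the rules behind the alignment index, so the following is only a minimal sketch under a stated assumption: the index is taken to be the share of an audit's objectives that have a directly or closely matching conclusion. The `Audit` record, the function names and the "partially aligned" band label are all hypothetical; only the 0.9-1 "well-aligned" band and the zero-alignment case come from the text.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass
class Audit:
    """Hypothetical record of one environmental performance audit report."""
    sai: str
    objectives: int            # number of stated audit objectives
    matched_conclusions: int   # objectives with a directly or closely matching conclusion


def alignment_index(audit: Audit) -> float:
    """Assumed rule: fraction of objectives that have a matching conclusion.

    An audit with no clearly defined objectives is scored 0 (assumption).
    """
    if audit.objectives == 0:
        return 0.0
    return audit.matched_conclusions / audit.objectives


def classify(index: float) -> str:
    """Band an index value; the 0.9 threshold follows the article."""
    if index >= 0.9:
        return "well-aligned"
    if index == 0:
        return "not aligned"
    return "partially aligned"  # hypothetical label for everything in between


def band_shares(audits: list[Audit]) -> dict[str, float]:
    """Share of audits falling in each alignment band."""
    counts = Counter(classify(alignment_index(a)) for a in audits)
    return {band: n / len(audits) for band, n in counts.items()}


# Illustrative data only, not taken from the study.
sample = [
    Audit("Example SAI", objectives=2, matched_conclusions=2),
    Audit("Example SAI", objectives=3, matched_conclusions=0),
    Audit("Example SAI", objectives=4, matched_conclusions=2),
    Audit("Example SAI", objectives=1, matched_conclusions=1),
]
print(band_shares(sample))
```

Computing the shares per SAI in this way would yield percentages of the kind reported above, although the study's actual scoring rules may differ from this simple ratio.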

It is recognized that objectives, and hence conclusions, might change during the course of an audit. However, in such circumstances, the objectives should be amended and conclusions drawn against the new objectives, rather than leaving the objectives intact and creating a misalignment between conclusions and objectives.

On almost all aspects studied (the entirety of results is not given due to space constraints), the Indian environmental audit reports showed more variability than those of the Australian and Canadian SAIs. This could, in part, be attributed to the Indian SAI's wider jurisdiction, complex institutional arrangements, less prescriptive auditing standards and variable quality of supervision. However, although the Australian and Canadian SAIs have a much narrower jurisdiction, less complex institutional arrangements and more prescriptive standards, significant inconsistency was also present in their audit reports. This inconsistency points to some generic underlying causes, one of which could be incomplete adherence to performance auditing reporting standards.

Deficient and/or Inconsistent Reporting of Methods. The reporting of methods associated with evidence collection received inconsistent attention from the studied SAIs (Table 1), suggesting that somewhat lesser importance is attached to it. Roughly 93% of Canadian, 91% of Australian and only 40% of Indian environmental performance audit reports contained a methods section. Further, the Canadian SAI recognized all the methods it used, though it reported them inconsistently, while the Australian (3) and Indian (5) SAIs did not recognize several methods (that is, used them without reporting them), including case study, economic/statistical analysis, system/process review, literature survey, benchmarking and modeling.

However, this is not unusual given that these less frequently used methods lack consistent definitions. Standard definitions of the various methods, established by INTOSAI, would facilitate a common understanding and minimize interpretational differences.

While non-reporting of uncommon methods by some SAIs is understandable, non-reporting by all three SAIs of all methods on some occasions (even the commonly used methods, such as document examination and interview) indicates a generic gap in documentation and transparency.
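The distinction drawn above between methods that were used and methods that were reported can be pictured as a simple set difference. The sketch below is illustrative only: the `Report` record, its fields and the sample values are hypothetical and do not reproduce the study's coding scheme or data.

```python
from dataclasses import dataclass, field


@dataclass
class Report:
    """Hypothetical coding of one audit report's evidence-gathering methods."""
    has_methods_section: bool
    methods_used: set[str] = field(default_factory=set)
    methods_reported: set[str] = field(default_factory=set)


def unreported_methods(report: Report) -> list[str]:
    """Methods evident in the audit but not named in the report."""
    return sorted(report.methods_used - report.methods_reported)


def methods_section_share(reports: list[Report]) -> float:
    """Fraction of reports that contain a methods section."""
    if not reports:
        return 0.0
    return sum(r.has_methods_section for r in reports) / len(reports)


# Illustrative data only, not taken from the study.
reports = [
    Report(True, {"interview", "document examination", "case study"},
           {"interview", "document examination"}),
    Report(False, {"interview", "benchmarking"}, set()),
]
print(unreported_methods(reports[0]))
print(methods_section_share(reports))
```

A tally of this kind depends on a shared definition of each method, which is why consistent definitions matter for any cross-SAI comparison.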

SAIs may argue that audit reports are not deficient, since current standards do not mandate the inclusion of such details and their primary clients, parliamentarians, appear to show little concern for them. However, SAIs should lead by example in promoting transparency rather than make arguments inconsistent with this ideal.

Despite the maturity of the field of performance auditing and the development of related standards, performance audit, unlike financial audit, is still not considered a routine exercise. Given that every performance audit is a standalone endeavor and that methodology plays a fundamental role in reaching conclusions, it would be beneficial to include a methodology section in every performance audit report.

Transparent reporting on each aspect of performance audit (e.g. objectives, criteria, methodology) and ensuring conclusions are aligned with objectives are critical to maintaining SAI reporting credibility. Thus, establishing a reporting standard for performance audits would address this need.

CONCLUSIONS

The results of a comparative study of environmental performance auditing in Australia, Canada and India suggest that, while the three SAIs have adequate QMS in place, the quality of their reports varies, not just between them but also within them. Institutional arrangements, traditions and variable implementation of QMS, which need each SAI’s individual attention, can explain some differences in reporting. Improved adherence to performance auditing reporting standards may help to address some of the reporting variations noted.

For more information about this article and a full list of references, email the author at awadhesh.prasad@anu.edu.au.